It’s late December and I am winding down 2021, which was pretty much 2020 too, while looking skeptically towards the actual 2022.

I will come up with a personal review after I am done with the #Rapha500 and will focus here on what I found to be great in 2021 work-wise (aka programming Java and database-related things).

Spring Data Neo4j 6

Spring Data Neo4j 6.0.0 was actually released in October 2020, superseding SDN5+OGM. The project started out as early as 2019 as SDN/RX and we at Neo4j had big ambitions to create a worthy successor. We in this case are Gerrit Meier and I.

I think we did succeed in many respects: We managed to get on the reactive hype train with SDN 6, something that would not have been possible with Neo4j-OGM, which basically tries to recreate a subgraph from the Neo4j database on the client side just before mapping. That subgraph creation did not play nicely with a reactive flow, so we needed to come up with something else and focussed on mapping individual records instead.

And that came with a couple of issues: We thought we knew everything that customers and users had been throwing at Neo4j-OGM over the years, but boy… You’ll never stop learning. And adding insult to injury: While we had a really long beta period with SDN/RX, a long enough warning that SDN 6 would be a migration and not an upgrade and also had betas there, 2021 started with… surprised users. Of course.

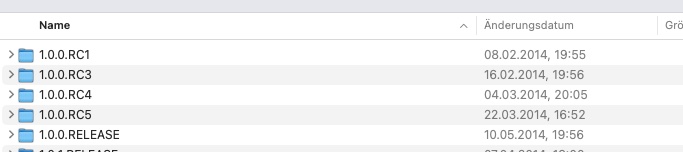

Up to now, we have done 26 releases of Spring Data Neo4j 6 this year: 7 patches for 6.0, 5 milestones and 1 RC for 6.1, 6.1 itself followed by 7 patches again, 3 milestones of 6.2, one RC and eventually 6.2 last month.

A big thank you to Mark Paluch who not only gave us so much invaluable feedback in that time, but also ran most of the releases.

No more zoom talks…

I tried to give a talk with the following abstract twice this year:

In 2014, the Reactive Manifesto was written: A pledge to make systems responsive, resilient, elastic and message driven. Two years later, in 2016, reactive programming started to go mainstream, with the rise of Spring WebFlux and Project Reactor. Another two years later, many NoSQL databases provided reactive database access. Neo4j, a transactional graph database, didn’t have a solution back then, but we started a big cross-team effort to provide reactive database access to Neo4j. It landed in 2019: A reactive stack, right from the query execution engine, to the transport layer and the driver (client connection) at the other end.

But that left the team working on Neo4j-OGM and Spring Data Neo4j out: What to do with an object mapper that had been deeply inspired by Hibernate and was working on a fully hydrated subgraph client side?

Well, we did what many developers did: Just let us rewrite the thing, take some inspiration from Spring Data JDBC and also from modern approaches to querying databases like jOOQ and be done with it.

While we did manage to make a lot of new users happy, we didn’t expect so many tickets from old users. They were not complaining about changed annotations or configuration, but rather about us having removed things that we considered drawbacks of the old system but that had been features people actually used.

If in-person conferences should ever happen again, I am inclined to actually do this at some point. However, not remote. I am done with Zoom talks, I just can’t any more…

TL;DR

Right now, SDN 6.2 is in excellent shape. We have been able to iron out all outstanding big issues, made our ideas clearer and also added brand new things, like GraphQL support via Query-DSL integration, a much improved Cypher-DSL and the most feature-rich projection mechanism of all Spring Data projects (which even got back-ported into Spring Data Commons), and all that while being in the same room only once in 2021 (albeit for BBQ).

I am really thankful to have colleagues and friends like Gerrit. It’s great when you can not only dream up things together, but also take care of issues later on.

Cypher-DSL

In mid-2020, Michael Hunger gave us the neo4j-contrib/cypher-dsl repository and coordinates to be used for our rebooted Cypher-DSL. We extracted the Cypher-DSL from SDN/RX before it became SDN 6, as we thought tooling like this is valuable beyond an object mapping framework, and we guessed right.

In 2021 we pushed out 23 releases: In the latest incarnation we support building most statements that Neo4j 4.4 supports, both in a fluent fashion and in a more imperative way. The Cypher-DSL now provides a static type generator based on SDN 6 annotations as well as a Cypher parser. The parser module is probably the thing I am most proud of in the project: It utilizes Neo4j’s own JavaCC-based parser to read Cypher strings into the Cypher-DSL AST, ready to be changed, validated or transformed.

The Cypher-DSL is used in SDN 6 as the main tooling to build queries, but it is also used in neo4j-graphql-java, a JVM-based stack for transforming GraphQL queries into Cypher. I have written about that here. In addition to that, I hear rumors that GraphAware is using it, too. Well, they can be happy, we just removed all experimental warnings and released 2022.0.0 already.

I appreciate the feedback from Andreas Berger and Christophe Willemsen on that topic a lot. Thank you for being with me on this in 2021.

Quarkus and Neo4j

I do wear my “I made Quarkus 1.0” shirt with pride, and I am happy that the Neo4j-extension was part of Quarkus from release 1 up to 2.5.3.

It won’t be in 2.6.0 directly.

What?! I hear you scream… Be not afraid, it’s one of the first citizens in the Quarkiverse Hub. You’ll find it in quarkiverse/quarkus-neo4j and of course on code.quarkus.io, and it learned so many new tricks this year, especially the Quarkus Dev Services support, for which I even created a video.

I fully support the decision to move extensions to a separate org while retaining the close connection to the parent project, both via the orgs and the code generator. The discussion and the arguments are nothing but stellar, have a look at Quarkus #16870, Moving extensions outside of core repository.

My life as a maintainer of that extension is much easier in that form. A big shoutout to the people in the above discussion, especially to Guillaume Smet, George Gastaldi and also to Galder Zamarreño. It’s a pleasure working with you.

Neo4j-Migrations

My database refactoring toolkit for Neo4j, Neo4j-Migrations, completely escalated in 2021. While it started off as a small shim to integrate SDN 6 into JHipster (btw, I’m still super happy about every encounter with Frederik and Matt), it now does a ton of things:

- Has a CLI

- Is distributed as native packages for macOS, Linux and Windows

- Has a fully automated release process

- Has a Quarkus extension

- Supports lifecycle callbacks

and more… which just got released as 1.2.2. The biggest impact on that project and my motivation has been made by Andres Almiray and JReleaser. Andres not only reached out to me to teach me about JReleaser, he also picked up my project, played with it and came up with a suggested workflow, and we hacked together the missing pieces in an afternoon. Stunning.

If you find either my Neo4j-Migrations tooling or JReleaser useful, leave a star, or support Andres in a form that suits you.

More things

Similar to the way we created Neo4j support in Quarkus for a nice OOTB experience, Dmitry Alexandrov and I started writing a similar extension in Oracle’s Helidon.io project. I really, really appreciate that companies can work together in a positive way despite the fact that they are competitors in other areas.

Speaking about Oracle: Every single interaction with their GraalVM team has been just splendid. Thanks Alina Yurenko and team!

Thanks to Kevin Wittek we have been able to participate in the beta testing of AtomicJar’s Testcontainers Cloud, an absolutely lovely experience. I do see a bright future for the journey that Richard North and Sergei Egorov started.

There are many more people whose input and feedback I appreciate a lot, not only this year, but in previous and upcoming ones as well. Here are just a couple of them: Gunnar Morling, knowledgeable in so many ways and always fun to talk with, Samuel Nitsche for input way beyond “just the tech” and surely Markus Eisele for always having an open ear.

Of course, there are even more. Remember, you all are valid. And more often than not, you do influence people, in some way or the other. I’m grateful to have a lot of excellent people in my life.

And with that, I sincerely hope that my first statement in this article will be just a bad pun, that 2022 will not be 2020, too, and that we can eventually safely meet in person again. Until then, stay safe and do create cool things. I still think that not all is fucked up, actually.

(Title image by Vidar Nordli-Mathisen.)
